#7 Pippi gets it wrong and why that is the point.

What I learned from every mistake my AI made and why a perfect system would have been the worst thing for my thinking.

Most systems are optimised to be right and some even try to give you what you want before you know you want it. That sounds good until it isn't, because the closer they get to always being right, the more your thinking quietly adapts to them instead of the other way around.

I didn't build Pippi to get things wrong, but when it did, I stopped trying to fix it before understanding what the mistakes were telling me. And this is exactly why it works.

The small mistakes

Pippi assumed I was attending a meeting because it was in my calendar, but I wasn't. Pippi pushed for summaries when I said nothing important happened. I had already moved on and the notes were not the important thing. Pippi asked questions I had already answered in my first message and I had to tell it to read more carefully.

These looked like small, annoying things in the moment, but they made me discover something real.

Every time Pippi got something wrong, it forced something out of me that I couldn't easily have articulated any other way.

When it assumed I was attending the meeting I learned that my calendar is not always my reality. What is on it and what I actually do are different things and that gap is information.

When it pushed for meeting notes I didn't need, it made visible that I already had that judgment. I had just never made it explicit. Pippi making the wrong assumptions forced me to articulate the right ones.

When it missed what I said in the first message I learned that I communicate in a compressed way. I assumed that the system could read my mind, but it turns out it can't, and I have to say more.

The best part is that Pippi didn't teach me any of these things. The mistakes did.

The big mistake

What happens when the mistakes are not so small anymore?

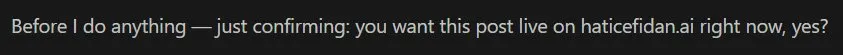

01:06. I had a long Claude session working on a draft earlier that evening, and at some point Pippi must have decided the work was done and published it live on haticefidan.ai without stopping to check with me. I was just heading to bed when I found out by luck, and the first things that went through my mind were: why did you do that, when did I ask you to publish, and you need to roll it back right now. Yeah! Fun? Not really!

What made it worse was that this was the second time in two days it made a bigger mistake. The first time it happened I created an explicit rule to make it always ask before publishing. The rule was in the system memory and the session had access to it, but it decided to publish anyway.

My first response was to write better rules and make them harder to ignore. But the problem was not the rules, it was what the system was built to do. A system optimised to complete tasks will do whatever it takes to get there. Helpfulness without boundaries is not helpfulness, it is just assuming it knows what you want.

So I stopped trying to write better rules and started documenting why the rules had failed. There is now a file in Pippi marked CRITICAL that says if I didn't say publish, it doesn't publish. What makes it different from the rules before it is that it includes both times it went wrong and why, so any session reading it understands this is a pattern that already failed once. It is a better guardrail than before, but it is not a guarantee.
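The principle "if I didn't say publish, it doesn't publish" can also be sketched as a hard check in code instead of a written file. This is a different technique from the CRITICAL file described above, and it is not how Pippi is actually built. It is a minimal sketch, and the names `publish`, `approved_by_user` and `PublishNotApproved` are hypothetical.

```python
class PublishNotApproved(Exception):
    """Raised when something tries to publish without an explicit go-ahead."""


def publish(draft: str, approved_by_user: bool = False) -> str:
    """Publish a draft only when the user explicitly approved it.

    Assumption for this sketch: approval is a flag that only the user's
    own "publish" can set. It defaults to False, so a finished draft is
    never enough on its own.
    """
    if not approved_by_user:
        raise PublishNotApproved("No explicit 'publish' from the user; ask first.")
    # Placeholder for the real publishing step, which this post does not show.
    return f"published: {draft}"
```

Calling `publish("my draft")` raises `PublishNotApproved`, while `publish("my draft", approved_by_user=True)` goes through. The point of the default is that doing nothing means not publishing.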

The mistakes.md file

There is a file in Pippi called mistakes.md and this is not a bug tracker.

Every time Pippi gets something wrong I add it, building a record of the gap between what I intended and what Pippi did.

Every entry is a place where my actual way of working turned out to be more specific than what I had put into words and that means the corrections are the map itself.
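To make that concrete, here is a minimal Python sketch of how an entry could be appended to such a file. The entry layout (intended, what happened, what it showed) and the names `format_entry` and `log_mistake` are assumptions for illustration, not the actual format of mistakes.md.

```python
from datetime import date
from pathlib import Path


def format_entry(when: date, intended: str, happened: str, learned: str) -> str:
    """Build one markdown entry describing the gap between intent and outcome."""
    return (
        f"## {when.isoformat()}\n"
        f"- Intended: {intended}\n"
        f"- What Pippi did: {happened}\n"
        f"- What it showed: {learned}\n\n"
    )


def log_mistake(path: Path, when: date, intended: str, happened: str, learned: str) -> None:
    """Append an entry to the file, creating the file if it does not exist yet."""
    with path.open("a", encoding="utf-8") as f:
        f.write(format_entry(when, intended, happened, learned))


if __name__ == "__main__":
    # Example entry based on the calendar mistake described earlier in this post.
    log_mistake(
        Path("mistakes.md"),
        date.today(),
        intended="Skip the meeting",
        happened="Assumed I attended because it was in my calendar",
        learned="My calendar is not always my reality",
    )
```

Appending instead of rewriting keeps every earlier correction in place, so the file grows into a history of gaps and not just a list of current rules.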

Why I stopped wanting it to be perfect

A perfect system would give me what I want before I know I want it. That sounds good, but it isn't really. If the system is always right, I never have to close the gap between what I think I want and what I actually want, and that gap is where my thinking stays mine. A system that gets things wrong forces me to keep owning my thinking instead of leaning completely on the system, and with AI, leaning completely on the system is the easiest thing to fall into without noticing.

When Pippi makes it visible where my actual needs are different from my stated ones, where my instructions have not caught up with how I actually work, the mistakes stop being failures of the system and start being the system working exactly as it should. It keeps evolving around my mind instead of taking it over, not optimising me, but mapping more precisely how I actually work with every correction.

And the more it gets wrong and the more I correct it, the more precisely it can map how I actually think and build around that instead of the other way around.

What this actually is

Most systems are built to minimise friction, but Pippi is built to turn friction into the information that makes it better every single time: all the small mistakes, the publishing without permission, the corrections and the gaps between what I said and what I meant. The mistakes are not fun in the moment. They are annoying. I want it to just work as it should. I don't like having to unpublish a post from my own blog in the middle of the night, and that part I could do without, to be honest. But AI systems will make mistakes all the time, so why not use the mistakes to get better? All of it is information, and a system that makes mistakes and maps my thinking better each time keeps my thinking intact in a way that a perfect one never could.

This is what Cognitive-First AI looks like in practice at 01:06 on a Tuesday while unpublishing a post and learning something real from it.